Professor emeritus Hoare has passed away at the age of 92. Known to many as C. A. R. Hoare, or Tony Hoare, he was one of the great minds in the domain of software engineering. He received the ACM Turing Award in 1980. Altreonic's history that has it roots in the late 1980's, was essentially based on Hoare's CSP process algebra. CSP stands for Communicating Sequential Processes. Here's my personal road that followed. Note that I am an engineer, not an academic. An engineering approach is to make something that works in practice but following sound principles that academics have developed.

Professor emeritus Hoare has passed away at the age of 92. Known to many as C. A. R. Hoare, or Tony Hoare, he was one of the great minds in the domain of software engineering. He received the ACM Turing Award in 1980. Altreonic's history that has it roots in the late 1980's, was essentially based on Hoare's CSP process algebra. CSP stands for Communicating Sequential Processes. Here's my personal road that followed. Note that I am an engineer, not an academic. An engineering approach is to make something that works in practice but following sound principles that academics have developed.

My initial exposure to software was while studying as an engineer in the domain of electronics and applied mechanics. We had an old IBM-360 and we learned to program in Algol-68 and Fortran. Later, as PCs became affordable, Pascal was added. Meanwhile, Tony Hoare had developed CSP, which later on was adopted as the conceptual framework for a unique processor, called the Transputer (ex-INMOS). At the time it was very different from the mainstream Intel processors. The transputer was a RISC-like CPU with unique support for software and hardware concurrency. It natively supported small processes and inter-processor communication. As it had a small register set and hence context process switching was fast and interprocessor communication had a low latency. For programming the unique occam language was developed. Occam was a direct implementation of a subset of CSP with processes and communication channels.

Just before that time, I had envisioned the concept of a parallel computer (using optical communication). With hindsight, building a parallel computer is easy. What's hard is how to program it in an efficient and scalable way. At that time the focus was on data-parallel programming, think about image processing whereby a large image is split in N chunks and each processor executes the same code on its chunk, providing a theoretical speed-up with a factor N. In reality, "grain size" and "communication latency" determine the unavoidable overhead. The idea was to use the principle of a spreadsheet. References from one cell to another cell can be seen as micro-messages. This worked well and we had the traditional Mandelbrot and equation of Laplace demos.

Around that time, INMOS put its transputer on the market. That was a great opportunity to put the ideas in practice. The development kit had 4 transputers and came with an occam compiler. I bought such an (expensive) kit and that was the start of a long story. First of all, although occam-1 was very simple and even had only 1 datatype (the byte), programming in occam had a steep learning curve to get working parallel programs. The compiler was unforgiving but if you got it compiled, the program would often run flawlessly. The main issue was not to have any deadlocks. After 1 month of error and trial I attended Professor Peter Welch's occam course and on the second day, the mental AHA switch happened. I watched my brain translating the simple exercise specification in "IF-THEN-ELSE" statements and realised that this was wrong. I also realised that this was the consequence being trained in programming using sequential languages whereby one tries to keep track of the whole state-space. The switch to concurrent thinking is to look for the actions in the specifications and then seeing how they are related. Actions become local processes and relationships become communication channels. Once, that mental switch is made, there is no way back. It is the natural way to analyse problems.

I then learned that this is the essence of Hoare's CSP. I summarise it here:

- No Shared State: Processes in CSP do not share memory; they communicate exclusively through explicit, synchronized channels. A process is sequential with descheduling points at channel communication. Each process executes in parallel with other processes.

- Synchronous Communication: A process sending a message (channel writer) must wait until another process receives the message (channel reader), providing built-in synchronization.

- Process Algebra: CSP provides mathematical methods to describe and verify the behavior of complex systems composed of simpler, smaller processes.

- Formalism: CSP uses mathematical modeling to prevent concurrency errors such as deadlocks, livelocks, and infinite overtaking.

Most importantly Hoare's was one of the pioneers who aimed for a Correctness by Construction: The goal of CSP is to enable the design of (concurrent) systems that are guaranteed to be correct through formal mathematical proof rather than just testing.

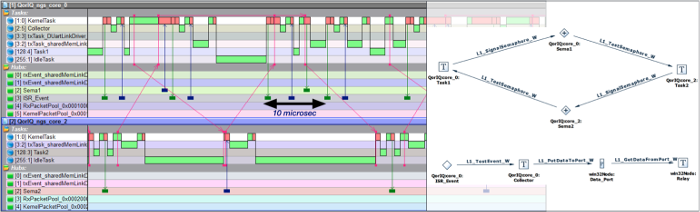

Two options remained: Spin and Leslie Lamport's TLA+. Professor Boute was our coach and TLA+ was selected. To make a long story short (the project took 3 years but was not a full-time activity), while there was a steep learning curve, we ended up doing things a bit differently. TLA+ was used to model and verify the architecture and the semantics, not the source code. That would have been a mistake as software sources evolve. The result was an RTOS kernel that implemented the concept of "Interacting Entities". The entities were mainly "Tasks" (processes in CSP) and the interactions the kernel services connecting the Tasks through intermediate" so-called "Hubs" (channels in CSP) but with much richer semantics, including asynchronous communication. The hubs and the packet switching also played a crucial role in the new architecture. The benefits were scalability, efficiency and maintainability. Unexpectidely, the code size shrank considerably, about a factor 10. The whole kernel now fitted in about 10 to 20 kb (this is very much processor dependent). The project was called "OpenComRTOS" and today lives on under the name VirtuosoNext. The current implementation supports fine-grain space partitioning and even allows to recover in micro-seconds from faults like overflow exceptions. The project was documented in a book (Formal Development of a network-Centric RTOS published by Springer).

The project also opened a road towards trustworthy system design. It enhanced the capabiity to think in abstract terms about systems. In a small electric vehicle, the "Interacting Entities" concept was implemented in the drive-by-wire software of the 4 independent wheels. In the GoedelWorks project we sought to achieve "correct by construction" for systems using meta-modeling of the project entities but also of the development processes to be followed. A key element was the dependency graph that allowed to keep track of the impact of changes. Finally, the ARRL (Assured Reliability and Resilience Level) concept we introduced reflects that the ultimate goal of trustworthy systems engineering is not only to be fault-tolerant, secure or safe but to survive, being able to function as intended whatever happens to it. The safety concern was also the motivation to work with non-traditional cell chemistries at kurt.energy. The illegal state there is a battery fire that can have multiple root causes.

There are muliple lessons that can be drawn from this long road. First of all, formal approaches are a great way to rethink known solutions. Why, because they are a tool helping the mind to think in a more abstract way, away from implementation details. Known solutions often prevent progress as they bias how people look at things. It also helps to think out of the box, questioning established practices. Of course, formal techniques are harder to use (certainly in the beginning) and there is the scalability limit. The way to overcome that is to move to the meta-levels, abstracting away the details. Formalisation is the key. To illustrate this with a small example, in the RTOS a priority-sorted linked list is used everywhere to implement waiting semantics. The first TLA+ models (influenced by the existing source code) also modeled the linked list. The result was that the model checker would not handle the state space explosion. One needs a lot of memory for that. Then it dawned on us that for the semantics of the services, the priority didn't matter and we just replaced it with a Boolean. One bit replacing a complete function. Simple but Smart. A complex solution is often a problem not well understood. But making it simple can take a serious effort even if with hindsight it was trivial. With formalisation and meta-modeling, it easier to think about the core, not the details and better solutions are found.

There are many people who were involved in this roadmap. It were great times working with them as a team. I never programmed anything, even the C-language was never mastered. This was an advantage because it helped to think about behaviour, not about the implementation. The interested reader can browse www.altreonic.com [1]and the internet for papers and presentations.

Eric Verhulst, 16th March 2026